- [2026-06-08] If Old Acquaintance Be Forgot [Fiction]

- [2026-05-29] Longevity tech: in a bad place, with good options

- [2026-05-28] The Nostradamus Fixpoint [Fiction]

- [2026-05-23] Graveyard [Fiction]

- [2026-05-17] My Soul to Keep [Fiction]

- [2026-05-10] Spectral [Fiction]

- [2026-05-03] Odds and Ends [Fiction]

- [2026-04-26] POV [Fiction]

- [2026-04-18] Industrial Secrets [Fiction]

- [2026-04-13] Ablative [Fiction]

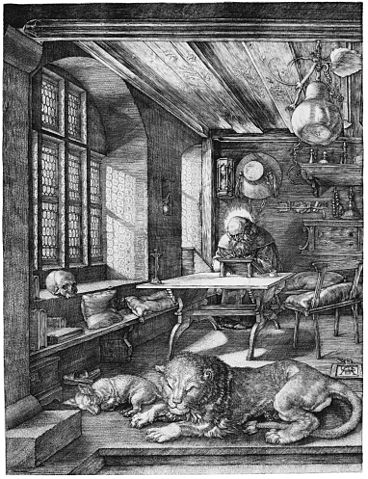

- [2026-04-02] The Patron Saint of Clues [Fiction]

- [2026-03-30] Already Disruptive: Journalism is a high-margin research technology, not a zero-margin content mill

- [2026-03-27] The Quiet Choir [Fiction]

- [2026-03-20] The Emergent Artifact 12 Heist [Fiction]

- [2026-03-16] Making Investing Fit for a Sci-Fi World

- [2026-03-13] The Net Sum of Love [Fiction]

- [2026-03-07] Children Insurance [Fiction]

- [2026-03-01] Interference Pattern [Fiction]

- [2026-02-26] The Mathematics of Change [Fiction]

- [2026-02-16] Runaway Geodesics [Fiction]

- [2026-02-11] The Human Bottleneck

- [2026-02-08] Citadel [Fiction]

- [2026-01-31] Acts of War [Fiction]

- [2026-01-29] Hacking the Hackathon

- [2026-01-24] Seaside Whispers [Fiction]

- [2026-01-24] Against Developer Productivity

- [2026-01-16] Breaking the Smart City Out of its Narcissism

- [2026-01-15] The Final Summation [Fiction]

- [2026-01-14] Affinity [Fiction]

- [2026-01-04] The Post-AI Organization

- [2026-01-03] The ECHELON Lacuna [Fiction]

- [2025-12-27] Accretion [Fiction]

- [2025-12-20] The Burning Church [Fiction]

- [2025-12-15] Invisible Art [Fiction]

- [2025-12-10] The Book of All Names (repost) [Fiction]

- [2025-12-07] Progression [Fiction]

- [2025-12-07] After the Bubble, the Dream Gap

- [2025-11-30] Ever-fixed [Fiction]

- [2025-11-27] The file on your local drive is the ultimate integration API

- [2025-11-23] Collateral Dreams [Fiction]

- [2025-11-13] Deeper than the Skin [Fiction]

- [2025-11-07] Overtime Prophets [Fiction]

- [2025-11-06] Rising on arXiv - 2025-10-31: Altermagnets, Einstein Probe, Cosmic noon [RisingOnArXiv]

- [2025-11-01] The Red Fleet [Fiction]

- [2025-10-25] Works of the Flesh [Fiction]

- [2025-10-25] Rising on arXiv - 2025-10-24: Cherenkov Telescope Array Observatory, Optical interconnects, Abelian anyons [RisingOnArXiv]

- [2025-10-18] Rising on arXiv - 2025-10-17: High Bandwidth Memory, Causal attribution, Primordial non-Gaussianities [RisingOnArXiv]

- [2025-10-18] Recapitulations [Fiction]

- [2025-10-12] Rising on arXiv - 2025-10-10: Lindbladians, ZKPs, Algonauts 2025 Challenge [RisingOnArXiv]

- [2025-10-11] Neonatal [Fiction]

- [2025-10-06] Rising on arXiv - 2025-10-05: ICCV 2025, Fermion sign problem, Genuine multipartite entanglement [RisingOnArXiv]

- [2025-09-28] Technology Transfer [Fiction]

- [2025-09-27] Rising on arXiv - 2025-09-26: Extraterrestrial Intelligence, Uhlmann's Theorem, uMLIPs [RisingOnArXiv]

- [2025-09-21] The Passage [Fiction]

- [2025-09-21] Rising on arXiv - 2025-09-19: GW231123, Optical anisotropy, Quantum-enhanced sensing [RisingOnArXiv]

- [2025-09-13] Rising on arXiv - 2025-09-12: Ultra-high-energy cosmic rays, Large-scale magnetic field, FRW Universe [RisingOnArXiv]

- [2025-09-12] The Test [Fiction]

- [2025-09-08] Hades [Fiction]

- [2025-09-06] Rising on arXiv - 2025-09-05: 3I/ATLAS, Extensive air showers, Secrecy rate [RisingOnArXiv]

- [2025-08-30] Rising on arXiv - 2025-08-29: IceTop, Habitable Worlds Observatory, Phase-field models [RisingOnArXiv]

- [2025-08-30] Electric Prayer [Fiction]

- [2025-08-24] Rising on arXiv - 2025-08-22: Performance Estimation Problem, Surgical decision-making, Preoperative planning [RisingOnArXiv]

- [2025-08-24] Crimson Lines on a Chart [Fiction]

- [2025-08-17] Rising on arXiv - 2025-08-17: Ferrons, Tunable platform, Simple closed curves [RisingOnArXiv]

- [2025-08-15] Silence Bought and Sold [Fiction]

- [2025-08-09] Rising on arXiv - 2025-08-08: DESI DR2, Sub-millimeter accuracy, Inflationary dynamics [RisingOnArXiv]

- [2025-08-08] Notes After the Incident [Fiction]

- [2025-08-03] Rising on arXiv - 2025-08-01: Low-altitude wireless networks (LAWN), Tetrablock, Hybrid metric-Palatini gravity [RisingOnArXiv]

- [2025-08-03] Emotional Warfare [Fiction]

- [2025-07-27] Rising on arXiv - 2025-07-25: Triangle counting, Split conformal prediction, Seyfert galaxy [RisingOnArXiv]

- [2025-07-27] Morning Prayers [Fiction]

- [2025-07-24] Beyond LLMs: Regulatory risks and potential of significantly advanced AI

- [2025-07-22] The Stream [Fiction]

- [2025-07-20] Rising on arXiv - 2025-07-18: principled foundation, quasiparticle picture, content diversity [RisingOnArXiv]

- [2025-07-18] The Snap [Fiction]

- [2025-07-14] Rising on arXiv - 2025-07-11: Multi-target tracking, Spatial audio, Autoregressive modeling [RisingOnArXiv]

- [2025-07-03] Time, summarized

- [2025-06-29] The Fallen [Fiction]

- [2025-06-28] Rising on arXiv - 2025-06-27: RTL generation, Contextual metadata, MAGE [RisingOnArXiv]

- [2025-06-19] Singularity City Revelations [Fiction]

- [2025-06-19] Rising on arXiv - 2025-06-13: Flow prediction, Institutional design, Memory retrieval [RisingOnArXiv]

- [2025-06-14] Ghost Girl [Fiction]

- [2025-06-09] Rising on arXiv - 2025-06-06: MathVision, Weighted projective space, Popularity bias [RisingOnArXiv]

- [2025-06-08] Musica Universalis [Fiction]

- [2025-06-02] Rising on arXiv - 2025-05-30: Claude 3.7 Sonnet, Solar Terrestrial Relations Observatory, Large Deviation Properties, Mu-SHROOM, Open-Source Solver, Humanoid Locomotion, Kling, GUI Agents, Textual Semantics, Spatial Reasoning [RisingOnArXiv]

- [2025-06-01] A Surfeit of Beauty [Fiction]

- [2025-05-28] Rising on arXiv - a short explainer [RisingOnArXiv]

- [2025-05-28] Rising on arXiv - 2025-05-26: GRPO, Large Reasoning Models, DeepSeek-R1, Verifiable rewards, Small Language Models, SemEval-2025 task, Intermediate reasoning steps, Interpretability, DESI, Vietnamese [RisingOnArXiv]

- [2025-05-23] Farewell [Fiction]

- [2025-05-16] Nostalgia [Fiction]

- [2025-05-12] The Warrior's Sacrifice [Fiction]

- [2025-05-08] Sloppy choices: the why and then-what of institutional LLM adoption

- [2025-05-04] The Varieties of Religious Expectation [Fiction]

- [2025-04-25] Market Signals [Fiction]

- [2025-04-21] Quick notes on the danger of LLM benchmarks

- [2025-04-17] Ledger of Tomorrows [Fiction]

- [2025-04-14] Almost entirely an outline: The generative AI domain expertise drain and the next divergence

- [2025-04-12] Bluesky Summary 2025-04-04 - 2025-04-12 [Bluesky]

- [2025-04-03] Bluesky Summary 2025-03-31 - 2025-04-03 [Bluesky]

- [2025-03-30] Rebuilding your organization's cognition for the ongoing economic crisis

- [2025-03-30] Bluesky Summary 2025-03-27 - 2025-03-30 [Bluesky]

- [2025-03-26] Bluesky Summary 2025-03-26 - 2025-03-26 [Bluesky]

- [2025-03-22] Convergence [Fiction]

- [2025-03-15] Gödel's Law [Fiction]

- [2025-03-10] The Death Gig [Fiction]

- [2025-02-23] Delivery System [Fiction]

- [2025-02-17] Nightmare Blueprints [Fiction]

- [2025-02-08] On Silence [Fiction]

- [2025-02-01] Mandelbrot City Fragment [Fiction]

- [2025-01-28] Evidence of a Crime [Fiction]

- [2025-01-27] Pastoral [Fiction]

- [2025-01-20] Confession [Fiction]

- [2025-01-12] Scattershot Prayer [Fiction]

- [2025-01-05] Place Settings [Fiction]

- [2024-12-30] The Aeneas Protocol [Fiction]

- [2024-12-22] Whispers [Fiction]

- [2024-12-08] The Library [Fiction]

- [2024-11-30] The Sisters of Eternal Winter [Fiction]

- [2024-11-23] Teachable Moment [Fiction]

- [2024-11-18] The Fourier Protocol [Fiction]

- [2024-11-14] Quick link: Another health crisis we aren't preparing for

- [2024-11-10] Getaway [Fiction]

- [2024-11-04] Apocalyptic Tableau [Fiction]

- [2024-10-27] Quick link: The variable is not the thing

- [2024-10-26] Things Kept [Fiction]

- [2024-10-22] Quick link: It's not tokens all the way down

- [2024-10-21] Quick link: The outlook is: Still Not Great

- [2024-10-19] The Nine Trillion Parameters of God [Fiction]

- [2024-10-14] The Apple [Fiction]

- [2024-10-10] Quick link: Using bots to bypass bots that will hire people...

- [2024-10-09] Automate with Care

- [2024-10-06] Genius [Fiction]

- [2024-10-01] Notas para una historia parcial de los generadores / Notes for a partial history of generators [Borgesiana]

- [2024-09-28] A Human Universe [Fiction]

- [2024-09-22] Change of Heart [Fiction]

- [2024-09-13] Fallen [Fiction]

- [2024-09-08] Mary of the Cold [Fiction]

- [2024-09-08] How to reverse-engineer your organization for fun and profit

- [2024-09-02] A Man of His Word [Fiction]

- [2024-08-26] Narrative Framing [Fiction]

- [2024-08-21] Quick link: How true industrial AI will look like

- [2024-08-18] A Murder of Crows [Fiction]

- [2024-08-12] A short note on Nvidia's strategic luck and the option value of being the best

- [2024-08-09] Targeted [Fiction]

- [2024-08-05] Quick link: The most interesting medal was won in the other Olympics

- [2024-08-05] A very short note: AI isn't $AI - don't sweat the market too much

- [2024-08-04] AI Alignment [Fiction]

- [2024-07-30] On those who listen to silence [Fiction]

- [2024-07-29] The predictable disaster of "predicting" crime

- [2024-07-29] Quick link: What moves the movers?

- [2024-07-22] Quick link: Lovecraftian data sets

- [2024-07-21] Frontier Work [Fiction]

- [2024-07-17] The Kids Aren't Alright (but that doesn't mean they aren't correct)

- [2024-07-17] Fixing biases in decision-making won't get you far enough

- [2024-07-14] The Metaphysics of the Fly [Fiction]

- [2024-07-08] "Transference" [Fiction]

- [2024-07-02] Q&A [Fiction]

- [2024-06-26] Quick link: Maybe the declining gender gap isn't about gender

- [2024-06-23] The Train [Fiction]

- [2024-06-22] The quality of generative AI is only half the story

- [2024-06-16] A quick note on the longevity market for the rich

- [2024-06-15] Breakfast Time [Fiction]

- [2024-06-14] Three ways to sabotage a company using AI

- [2024-06-06] Paying for your own commodification

- [2024-06-06] Money is the Utility Function: Thinking about Return on AI

- [2024-06-03] On the Soul's Strength [Fiction]

- [2024-06-02] Uniform [Fiction]

- [2024-05-25] The Pygmalion Maneuver [Fiction]

- [2024-05-20] Eternal [Fiction]

- [2024-05-11] Employee Survey [Fiction]

- [2024-05-05] Into the Waveless Sea [Fiction]

- [2024-05-04] LLMs are infrastructure - but so are some of their outputs!

- [2024-04-29] You're underreacting to climate change

- [2024-04-26] Further Varieties of Religious Experience [Fiction]

- [2024-04-14] The Siege [Fiction]

- [2024-04-14] Quick link: It turns out having a better opponent does help you get better

- [2024-04-13] How Copilot will lead to hiring more developers, not less

- [2024-04-12] All-in Ante [Fiction]

- [2024-04-06] AI and the Complexity of Loss

- [2024-03-30] The Spirit [Fiction]

- [2024-03-24] A Private Deal [Fiction]

- [2024-03-23] Quick link: A mathematician on AI for mathematicians

- [2024-03-22] What AI designers can learn from Dracula

- [2024-03-16] Auto-summarized Entries from a Personal Journal of Applied Artificial Intelligence in Psychological #Self-Care [Fiction]

- [2024-03-15] Quick Link: "The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits"

- [2024-03-09] Whispered Dreams [Fiction]

- [2024-03-04] A Hole in the World [Fiction]

- [2024-02-25] In the waiting room at Avalon [Fiction]

- [2024-02-22] The Scaffolding [Fiction]

- [2024-02-21] After Trolls, Elves. Or not.

- [2024-02-16] How to use AI to design efficient teams

- [2024-02-10] The Garden of Unsilvered Mirrors [Fiction]

- [2024-02-06] Stochastic [Fiction]

- [2024-02-05] Quick link: AI assisting journalists, but to do what?

- [2024-02-03] The AI role almost nobody talks about

- [2024-02-02] Organizational hollowing and the lethal 80% AI

- [2024-01-30] New short story: "Sanity Economics" [Fiction]

- [2024-01-30] Ask not what AI engineering can do for aesthetics, but what aesthetics can do for AI engineering

- [2024-01-21] New short story: "Generation Gap" [Fiction]

- [2024-01-18] Quick link: BREAKING NEWS: Cheap rewrites crowd out expensive research

- [2024-01-15] The most useful phrase about AI you will read this month

- [2024-01-13] New short story: "When he himself might his quietus make" [Fiction]

- [2024-01-11] The goal of data analysis is to kill time

- [2024-01-06] New short story: "The Traveler and the Goddess" [Fiction]

- [2023-12-31] New short story: "Elective" [Fiction]

- [2023-12-24] New short story: "The Secret Conclave of 2039" [Fiction]

- [2023-12-20] New short story: "A Voice in the Crowd" [Fiction]

- [2023-12-18] Quick link: Manufacturing delaborization and development strategy (with notes on Argentina)

- [2023-12-12] New short story: "River Crossings" [Fiction]

- [2023-12-03] New short story: "The Festivals of Winter" [Fiction]

- [2023-11-27] Informal paper: "Technology portfolio modeling, generative AI, and optimal fund design"

- [2023-11-25] Quick link: Burning the world and/or USD 400 billion a year

- [2023-11-25] New short story: "Spoils of War" [Fiction]

- [2023-11-23] The one AI-related existential risk I'm worried about

- [2023-11-22] Not a story but fifty-three: "Viral Fixpoint - The Adversarial Metanoia archives (2019-2020)" [Fiction]

- [2023-11-18] New short story: "Revelation Engineering" [Fiction]

- [2023-11-17] Quick link: When it comes to causal knowledge, every little bit (of lowered entropy) helps

- [2023-11-12] New short story: "Offerings" [Fiction]

- [2023-11-12] And now for something different: architecture and the Metaverse

- [2023-11-10] Quick link: First data points on the impact of ChatGPT on employment

- [2023-11-08] Using protein language models to accelerate their artificial evolution works; the interesting part is why.

- [2023-11-06] Quick link: Knowing what your algorithm thinks it knows

- [2023-11-05] New short story: "Truth-seeking Sessions" [Fiction]

- [2023-11-05] AI hiring filters don't optimize what you think they do

- [2023-10-30] New short story: "Deep Inside the Glitch Forest" [Fiction]

- [2023-10-29] The expertise architecture of journalism, and how to use AI to kill it faster

- [2023-10-29] Quick link: embarrassingly elegant AI+expert optimization teams

- [2023-10-22] New short story: "Of Children and Other Summonses" [Fiction]

- [2023-10-18] New short story: "Professional Integrity" [Fiction]

- [2023-10-18] A quick reminder that deep learning is a hack

- [2023-10-07] New short story: "NPC Encounters" [Fiction]

- [2023-10-02] New short story: "The Former Frontier" [Fiction]

- [2023-09-28] A couple of useful papers on modeling and measuring AIs and human minds

- [2023-09-27] The Spy Who Knew Nothing

- [2023-09-26] New short story: "Bedroom Memories" [Fiction]

- [2023-09-18] Quick Link: Surveillance AI as an export

- [2023-09-17] New short story: "Ecological Succession" [Fiction]

- [2023-09-10] New short story: "The Thin Dotted Line" [Fiction]

- [2023-09-04] New short story: "Battlefield Conversion" [Fiction]

- [2023-09-01] The distracted geopolitics of stochastic parrots

- [2023-08-30] New short story: "Illusion of Choice" [Fiction]

- [2023-08-20] New short story: "Restorative Justice" [Fiction]

- [2023-08-18] Quick link: "Genesis-DB: a database for autonomous laboratory systems"

- [2023-08-16] New short story: "Solution Space" [Fiction]

- [2023-08-06] New short story: "Romantic Chemistry" [Fiction]

- [2023-08-05] Quick link: just because you're superhuman it doesn't mean you get to be inconsistent

- [2023-08-03] Neurotech-proofing Your Product Design: A First Step

- [2023-08-02] New short story: "The Computational Complexity of Love" [Fiction]

- [2023-07-25] New short story: "A Solitary Death" [Fiction]

- [2023-07-24] The LLM revolution is going great

- [2023-07-21] When you have a generic content generator, everything looks generic content

- [2023-07-18] The problem isn't intelligence being artificial, it's intelligence being uniform

- [2023-07-15] New short story: "Bad Feelings" [Fiction]

- [2023-07-10] New short story: "How to survive visiting a dead town" [Fiction]

- [2023-07-04] Wavelights and AIs as strategic amplifiers

- [2023-07-02] NPS, I'm looking at you...

- [2023-07-01] New short story: "A Distant Home" [Fiction]

- [2023-06-27] New short story: "A Sense of Community" [Fiction]

- [2023-06-20] New short story: "Emotional Surplus" [Fiction]

- [2023-06-10] New short story: "The Knife, the Catacomb, the Crime, and the Saint" [Fiction]

- [2023-06-08] To get a glimpse of the future, read the PhD theses of smart people

- [2023-06-06] Large language models are stranger than most people think

- [2023-06-04] Very good advice from very smart people on experiment design

- [2023-06-03] New short story: "Lightly Censored Letter Home from a Ghoul" [Fiction]

- [2023-05-28] New short story: "The Third Inheritance" [Fiction]

- [2023-05-21] Quick link: de novo antibody design with generative AI

- [2023-05-21] New short story: "The Commute" [Fiction]

- [2023-05-15] Quick link: Configurable apps for grown-ups

- [2023-05-14] Quick link: How efficient is public infrastructure investment?

- [2023-05-14] New short story: "Upon the Place Beneath" [Fiction]

- [2023-05-09] AI and your career

- [2023-05-08] New short story: "How to hunt monsters" [Fiction]

- [2023-05-02] How to improve your information diet in a generative AI world

- [2023-04-29] New short story: "Iterative Prototyping" [Fiction]

- [2023-04-22] The World Bank on the energy transition in low- and middle-income countries: to solve the lack of sandwiches, get bread and ham

- [2023-04-22] New short story: "Forensic Analysis" [Fiction]

- [2023-04-17] New short story: "Educational Software" [Fiction]

- [2023-04-14] Enshittification, accounting... and email?

- [2023-04-11] The AI killer app isn't answers, it's questions

- [2023-04-10] New short story: "The Plot" [Fiction]

- [2023-04-05] What I mean when I say "written without an AI"

- [2023-04-02] New short story: "Night and Rain were the Local Optimum" [Fiction]

- [2023-03-30] The new scarcity value of creative theory

- [2023-03-26] New short story: "A Sense of Identity" [Fiction]

- [2023-03-23] An SteampunkGPT thought experiment

- [2023-03-22] AI, negative externalities, and using economics to stop the Office Apocalypse

- [2023-03-19] New short story: "Criminogenic Tech" [Fiction]

- [2023-03-13] Innovation is what you build when everybody else is nervous [Fiction]

- [2023-03-12] New short story: "Targeting Protocols" [Fiction]

- [2023-03-04] New short story: "Small Transactions" [Fiction]

- [2023-03-03] How to think about that Google "mind reading with Stable Diffusion" paper

- [2023-03-01] New short story: "A Catalog of Invisible Things" [Fiction]

- [2023-02-22] New short story: "Extraneous" [Fiction]

- [2023-02-15] New short story: "A Map of the Sea" [Fiction]

- [2023-02-14] Time for a post-Musk public understanding of "the algorithm"

- [2023-02-10] New short story: "A Willingness to Help" [Fiction]

- [2023-02-08] Of Chatbots, Love, Money, and Sherlock Holmes

- [2023-02-01] The AI CFO

- [2023-01-31] New short story: "To Watch with Loving Eyes" [Fiction]

- [2023-01-24] ChatGPT isn't smart, your tests are stupid

- [2023-01-21] New short story: "Noir" [Fiction]

- [2023-01-19] AI and the macroeconomics of brains

- [2023-01-14] New short story: "The Cartographer" [Fiction]

- [2023-01-10] Relax: ChatGPT mostly breaks the parts of the Internet that are already broken

- [2023-01-07] New short story: "Therapeutic" [Fiction]

- [2023-01-05] A short timeline of the future of BCIs

- [2023-01-03] Ask not what AI can do for HR, but what HR will have to do for AI

- [2022-12-31] New short story: "The Perhaps Vendetta" [Fiction]

- [2022-12-24] "X for the Unknown"

- [2022-12-22] Don't be afraid of butterflies

- [2022-12-20] AIs as societal zero-day exploits

- [2022-12-19] Programming is (also) a liberal art

- [2022-12-19] New short story: "The Summation" [Fiction]

- [2022-12-16] Crypto was a poetical triumph... and/or epistemic nihilism

- [2022-12-15] Web 3 is older than the century

- [2022-12-10] New short story: "Rules of the Farm" [Fiction]

- [2022-12-05] New short story: "After the War" [Fiction]

- [2022-11-26] New short story: "The Fractal Order" [Fiction]

- [2022-11-20] New short story: "Alchemical Diagrams" [Fiction]

- [2022-11-12] New short story: "The Wittgenstein Condition" [Fiction]

- [2022-11-11] Cognitive culture, the tech market collapse, and what to focus 2023 startups on

- [2022-11-07] The AI biotech revolution won't be the one you think

- [2022-11-07] New short story: "On the Church of the Sea" [Fiction]

- [2022-11-07] How to defeat a superhuman AI

- [2022-10-31] New short story: "Self-criticism" [Fiction]

- [2022-10-23] New short story: "After the Rally at VR R'lyeh" [Fiction]

- [2022-10-18] New short story: "The World-Stealer" [Fiction]

- [2022-10-08] New short story: "What Gold Dreams Of" [Fiction]

- [2022-10-06] Book chapter for "Argentina en Internet"

- [2022-10-04] New short story: "Game Mechanics" [Fiction]

- [2022-09-24] New short story: "Molecular Sacrament" [Fiction]

- [2022-09-17] New short story: "The Family Crypt" [Fiction]

- [2022-09-12] New short story: "To be Known by the Walls" [Fiction]

- [2022-09-03] New short story: "New Foundations" [Fiction]

- [2022-08-27] New short story: "The Defeated" [Fiction]

- [2022-08-20] New short story: "Three Tales and a Memo" [Fiction]

- [2022-08-16] On privacy as a wu wei feature

- [2022-08-14] New short story: "Sensory Deprivation" [Fiction]

- [2022-08-08] A throwaway note on haunted houses, websites, and other architectural monstrosities

- [2022-08-05] A throwaway note on the rhizome of all evil

- [2022-08-04] New short story: "Hieratic" [Fiction]

- [2022-08-02] A throwaway note on learning myopia and strategic fear [Fiction]

- [2022-07-31] New short story: "In Your Head" [Fiction]

- [2022-07-28] A throwaway note on the archetypal impact of quantum computing

- [2022-07-23] New short story: "The Holy Assassin" [Fiction]

- [2022-07-14] New short story: "A prayer for forgiveness" [Fiction]

- [2022-07-14] A throwaway note on haunted algorithms

- [2022-07-13] A throwaway note on the aesthetics of AI

- [2022-07-13] A throwaway note on Deleuze-Guattari and crappy social networks

- [2022-07-09] New short story: "The Bloody Tree" [Fiction]

- [2022-07-02] New short story: "Legal Magic" [Fiction]

- [2022-06-30] Rethinking the startup for a world of expensive money and cheap computation

- [2022-06-25] New short story: "Procedural" [Fiction]

- [2022-06-19] New short story: "Faster and Deeper than the Eye" [Fiction]

- [2022-06-11] Unstarted Revolutions: How to think about what AI hasn't changed (yet)

- [2022-06-11] New short story: "The Future of Work" [Fiction]

- [2022-06-04] New short story: "Rumor" [Fiction]

- [2022-05-28] New short story: "A Theology of the Depths" [Fiction]

- [2022-05-28] Decolonizing the Smart City for Fun and Profit [Fiction]

- [2022-05-24] Financial innovation after the Fintechdämmerung [Fiction]

- [2022-05-22] New short story: "White Collar Work at the Central Node of All Realities" [Fiction]

- [2022-05-14] New short story: "The Light After" [Fiction]

- [2022-05-08] New short story: "The Art of the Balanced Party" [Fiction]

- [2022-05-06] On WIRED: "DALL-E 2 Creates Incredible Images—and Biased Ones You Don’t See"

- [2022-04-28] New short story: "Monstrous Choices" [Fiction]

- [2022-04-23] New short story: "The Singularity Probe" [Fiction]

- [2022-04-16] What does DALL-E 2 know that it can't show us?

- [2022-04-16] New short story: "The Black Car" [Fiction]

- [2022-04-14] My podcast with Get Your AI On!

- [2022-04-10] Why warning about technical debt only makes it worse

- [2022-04-09] New short story: "Iterative Design" [Fiction]

- [2022-04-08] DALL-E 2 is out (although not open)

- [2022-04-02] New short story: "The Story in the Blood" [Fiction]

- [2022-03-26] New short story: "Synapses Firing Like Shards of Light" [Fiction]

- [2022-03-26] Macho Cyberwarfare and the Long Game

- [2022-03-20] New short story: "The Stone Mind" [Fiction]

- [2022-03-13] New short story: "The Babel Journal" [Fiction]

- [2022-03-10] PSG Startups and the Piles-of-Money Trap

- [2022-03-05] New short story: "Adversarial Training" [Fiction]

- [2022-02-26] New short story: "A Cartography of Sighs" [Fiction]

- [2022-02-19] New short story: "A Room with a Voice" [Fiction]

- [2022-02-16] Artificial Intelligence without the Data

- [2022-02-14] New short story: "Unplugged" [Fiction]

- [2022-02-10] New short story: "The Goddess of War" [Fiction]

- [2022-02-06] We've got our climate change priorities backwards

- [2022-01-31] Sustainability as Collective Superintelligence [Fiction]

- [2022-01-31] New short story: "The Job of Your Dreams" [Fiction]

- [2022-01-24] New short story: "The Time Machine Engineering War" [Fiction]

- [2022-01-15] Strategies for the NFT economy from the ground up

- [2022-01-15] New short story: "Cryptogothic" [Fiction]

- [2022-01-12] The banality of coup prediction algorithms, and the possible political uselessness of AI [Fiction]

- [2022-01-09] What comes next in the art-finance nexus

- [2022-01-08] New short story: "New Life" [Fiction]

- [2022-01-04] New short story: "The Five-Second Gap" [Fiction]

- [2021-12-29] Let's not build a Neuro-Clearview AI, please

- [2021-12-27] When good AI ethics is just good AI engineering

- [2021-12-25] New short story: "A Crisper Fairy Tale" [Fiction]

- [2021-12-20] New short story: "Silent Whispers" [Fiction]

- [2021-12-18] The Metaverse as Second-Rate Prophecy

- [2021-12-11] New short story: "Reality v2" [Fiction]

- [2021-12-08] New short story: "The Writers' Room" [Fiction]

- [2021-11-28] What’s new in News – Nov 21, 2021 to Nov 27, 2021

- [2021-11-28] Seeing like the market – Nov 28, 2021

- [2021-11-27] Two hundred words on AI as a delaying factor in pharmaceutical innovation

- [2021-11-27] New short story: "Level Up" [Fiction]

- [2021-11-22] Reality leaks, and the Metaverse will be worse

- [2021-11-21] What’s new in News – Nov 14, 2021 to Nov 20, 2021

- [2021-11-21] Seeing like the market – Nov 21, 2021

- [2021-11-21] New short story: "Metaverse Blues" [Fiction]

- [2021-11-16] Updated the map of TED talks

- [2021-11-14] What’s new in News – Nov 7, 2021 to Nov 13, 2021

- [2021-11-14] Seeing like the market – Nov 14, 2021

- [2021-11-11] New short story: "The Rights to the Rose" [Fiction]

- [2021-11-07] Seeing like the market – Nov 7, 2021

- [2021-11-06] New short story: "Ghost Hunters" [Fiction]

- [2021-11-01] Update to the COVID-19 Country Response Scores

- [2021-10-31] What’s new in News – Oct 24, 2021 to Oct 30, 2021

- [2021-10-31] Seeing like the market - Oct 31, 2021

- [2021-10-30] New short story: "Asylum HQ" [Fiction]

- [2021-10-24] What’s new in News – Oct 17, 2021 to Oct 23, 2021

- [2021-10-24] Seeing like the market – Oct 24, 2021

- [2021-10-24] New short story: "Orbiter" [Fiction]

- [2021-10-22] Decentralized Medicine (DeMed): how to survive as a doctor and thrive as a patient in a world of ubiquitous AI

- [2021-10-20] The three arms of the crypto galaxy [Fiction]

- [2021-10-19] On The Economics of Crappy AI

- [2021-10-17] What’s new in News – Oct 10, 2021 to Oct 16, 2021

- [2021-10-17] Seeing like the market – Oct 17, 2021

- [2021-10-16] New short story: "On the Ghosts of Former Lovers" [Fiction]

- [2021-10-15] Updated the map of TED talks

- [2021-10-10] What’s new in News – Oct 3, 2021 to Oct 9, 2021

- [2021-10-10] Seeing like the market – Oct 10, 2021

- [2021-10-09] New short story: "Primus Inter Pares" [Fiction]

- [2021-10-03] What’s new in News – Sep 26, 2021 to Oct 2, 2021

- [2021-10-03] Seeing like the market – Oct 3, 2021

- [2021-10-02] New short story: "The Strangers" [Fiction]

- [2021-10-01] Update to the COVID-19 Country Response Scores

- [2021-09-30] There's no such thing as an outlier...

- [2021-09-29] Exactamente cuatro frases sobre la política energética argentina

- [2021-09-26] What’s new in News – Sep 19, 2021 to Sep 25, 2021

- [2021-09-26] Seeing like the market - Sep 26, 2021

- [2021-09-25] Two hundred words on the role of self-indulgence in a career in tech

- [2021-09-25] New short story: "The Camelot Daily" [Fiction]

- [2021-09-19] What’s new in News – Sep 12, 2021 to Sep 18, 2021

- [2021-09-19] Seeing like the market – Sep 19, 2021

- [2021-09-19] New short story: "Inside the Blazing Forest" [Fiction]

- [2021-09-16] TED World updated!

- [2021-09-12] What’s new in News – Sep 5, 2021 to Sep 11, 2021

- [2021-09-12] Seeing like the market - Sep 12, 2021

- [2021-09-12] New short story: "Human Supervision" [Fiction]

- [2021-09-05] What’s new in News – Aug 29, 2021 to Sep 4, 2021

- [2021-09-05] Seeing Like the Market – Sep 5, 2021

- [2021-09-04] New short story: "The GPT-5 Revelation" [Fiction]

- [2021-09-01] Update to the COVID-19 Country Response Scores

- [2021-08-31] Two hundred words on the paradox of AI job monitoring

- [2021-08-29] What’s new in News – Aug 22, 2021 to Aug 28, 2021

- [2021-08-29] Seeing Like the Market – Aug 29, 2021

- [2021-08-28] Two hundred words on what elite football tells us about organizational competitiveness (and Afghanistan)

- [2021-08-28] New short story: "Gearhead" [Fiction]

- [2021-08-25] Two hundred words on the problems of insuring self-driving cars

- [2021-08-22] Words will not stay in place: tracking vocabulary micro-shifts

- [2021-08-22] What’s new in News – Aug 15, 2021 to Aug 21, 2021

- [2021-08-22] Seeing Like the Market – Aug 22, 2021

- [2021-08-22] New short story: "On overstepping the modesty of nature" [Fiction]

- [2021-08-15] What’s new in News – Aug 8, 2021 to Aug 14, 2021

- [2021-08-15] Seeing Like the Market – Aug 15, 2021

- [2021-08-15] New short story: "Enemy AI" [Fiction]

- [2021-08-09] New short story: "The Six Sigma Saint" [Fiction]

- [2021-08-08] What’s new in News – Aug 1, 2021 to Aug 7, 2021

- [2021-08-08] Seeing Like the Market – Aug 8, 2021

- [2021-08-07] TED World updated!

- [2021-08-01] What’s new in News – Jul 25, 2021 to Jul 31, 2021

- [2021-08-01] Update to the COVID-19 Country Response Scores

- [2021-08-01] Seeing Like the Market – Aug 1, 2021

- [2021-07-28] A Map of TED World (not affiliated to TED)

- [2021-07-25] What’s new in News – Jul 18, 2021 to Jul 24, 2021

- [2021-07-25] Seeing Like the Market – Jul 25, 2021

- [2021-07-25] New short story: "Risk Control" [Fiction]

- [2021-07-18] What’s new in News – Jul 11, 2021 to Jul 17, 2021

- [2021-07-18] Seeing Like the Market – Jul 18, 2021

- [2021-07-17] New short story: "Provenance" [Fiction]

- [2021-07-11] What’s new in News – Jul 4, 2021 to Jul 10, 2021

- [2021-07-11] Seeing Like the Market – Jul 11, 2021

- [2021-07-10] New short story: "Deep Space Travel Journal from New Jersey" [Fiction]

- [2021-07-07] Secular trends and information shocks: telling a rocket from an NFT

- [2021-07-04] What’s new in News – Jun 27, 2021 to Jul 3, 2021

- [2021-07-04] Seeing Like the Market – Jul 4, 2021

- [2021-07-04] New short story: "The Call of the Stars" [Fiction]

- [2021-07-03] Update to the COVID-19 Country Response Scores

- [2021-06-27] What’s new in News – Jun 20, 2021 to Jun 26, 2021

- [2021-06-27] Seeing Like the Market – Jun 27, 2021

- [2021-06-27] New short story: "Poisoners' Gold" [Fiction]

- [2021-06-20] What’s new in News – Jun 13, 2021 to Jun 19, 2021

- [2021-06-20] Seeing Like the Market – Jun 20, 2021

- [2021-06-20] New short story: "The Black Blight of Mars" [Fiction]

- [2021-06-13] What's new in News - Jun 6, 2021 to Jun 12, 2021

- [2021-06-13] Seeing Like the Market – Jun 13, 2021

- [2021-06-13] New short story: "The Flaw" [Fiction]

- [2021-06-07] What’s new in News – May 29, 2021 to Jun 6, 2021

- [2021-06-07] Seeing Like the Market – Jun 6, 2021

- [2021-06-06] New short story: "Algorithmic Trades" [Fiction]

- [2021-06-03] Introducing the Investment Climatology Project

- [2021-06-01] Update to the COVID-19 Country Response Scores

- [2021-05-30] What’s new in News – May 23, 2021 to May 29, 2021

- [2021-05-30] Seeing Like the Market – May 30, 2021

- [2021-05-30] New short story: "The Corrected Stuff" [Fiction]

- [2021-05-23] What’s new in News – May 16, 2021 to May 22, 2021

- [2021-05-23] Seeing Like the Market – May 23, 2021

- [2021-05-22] New short story: "The Book of All Names" [Fiction]

- [2021-05-22] Ficción: "El Libro de Todos los Nombres"

- [2021-05-16] What’s new in News – May 9, 2021 to May 15, 2021

- [2021-05-16] Seeing Like the Market – May 16, 2021

- [2021-05-15] New short story: "Zombie Movies" [Fiction]

- [2021-05-13] Seeing the world like a container

- [2021-05-09] What’s new in News – May 2, 2021 to May 8, 2021

- [2021-05-09] Seeing Like the Market – May 9, 2021

- [2021-05-08] New short story: "Rights Management" [Fiction]

- [2021-05-03] New short story: "Basketball IQ" [Fiction]

- [2021-05-02] What's new in News - Apr 25, 2021 to May 1, 2021

- [2021-05-02] Seeing Like the Market - May 2, 2021

- [2021-04-25] What's new in News - Apr 18 to Apr 24

- [2021-04-25] Update to the COVID-19 Country Response Scores

- [2021-04-25] Seeing Like the Market - Apr 25, 2021

- [2021-04-24] New short story: "Fireside Chats" [Fiction]

- [2021-04-18] What's New in News - Apr 11, 2021 to Apr 17, 2021

- [2021-04-18] Seeing Like the Market - Apr 18, 2021

- [2021-04-17] New short story: "The Tiresian Mind-Builders" [Fiction]

- [2021-04-11] What's New in News - Apr 4, 2021 to Apr 10, 2021

- [2021-04-11] Seeing Like the Market - Apr 11, 2021

- [2021-04-10] New short story: "The Angels of Maybe" [Fiction]

- [2021-04-05] New short story: "The Game of Life" [Fiction]

- [2021-04-04] What's New in News - Mar 28, 2021 to Apr 3, 2021

- [2021-04-04] Seeing Like the Market - Apr 4, 2021

- [2021-04-01] Update to the COVID-19 Country Response Scores

- [2021-03-28] What’s New in News – Mar 21, 2021 to Mar 27, 2021

- [2021-03-28] Seeing Like the Market - Mar 28, 2021

- [2021-03-27] New short story: "The Secrecy of Need" [Fiction]

- [2021-03-26] Apache (van Gogh, 1910)

- [2021-03-21] What's New in News - Mar 14, 2021 to Mar 20, 2021

- [2021-03-21] Seeing Like the Market - Mar 21, 2021

- [2021-03-20] New short story: "Tale of the One Waiting" [Fiction]

- [2021-03-15] What's New in News - Mar 7, 2021 to Mar 13, 2021

- [2021-03-15] Seeing Like the Market - Mar 15, 2021

- [2021-03-12] New short story: "Theseus, the Minotaur, and the Labyrinth" [Fiction]

- [2021-03-07] What's New in News - Feb 28, 2021 to Mar 6, 2021

- [2021-03-07] Seeing Like the Market - Mar 7, 2021

- [2021-03-01] Update to the COVID-19 Country Response Scores

- [2021-02-28] What's New in News - Feb 21, 2021 to Feb 27, 2021

- [2021-02-28] Seeing Like the Market - Feb 28, 2021

- [2021-02-22] What's New in News - Feb 14, 2021 to Feb 20, 2021

- [2021-02-22] Seeing Like the Market - Feb 21, 2021

- [2021-02-22] New short story: "The Rose Garden" [Fiction]

- [2021-02-14] What's New in News - Feb 7, 2021 to Feb 13, 2021

- [2021-02-14] Seeing Like the Market - Feb 14, 2021

- [2021-02-14] New short story: "Sanity Check" [Fiction]

- [2021-02-07] What's New in News - Jan 31, 2021 to Feb 6, 2021

- [2021-02-07] Seeing Like the Market - Feb 7, 2021

- [2021-02-06] New short story: "Red Team/Green Team" [Fiction]

- [2021-02-05] Seeing like the market

- [2021-02-02] Update to the COVID-19 Country Response Scores

- [2021-01-31] What's New in News - Jan 24, 2021 to Jan 30, 2021

- [2021-01-30] New short story: "The Pale Rider" [Fiction]

- [2021-01-24] What's New in News - Jan 17, 2021 to Jan 23, 2021

- [2021-01-23] New short story: "The Genius Factory" [Fiction]

- [2021-01-17] What's New in News - Jan 10, 2021 to Jan 16, 2021

- [2021-01-17] New short story: "A Tale to Remember" [Fiction]

- [2021-01-14] Comparing the effectiveness of country-level responses to COVID-19

- [2021-01-11] What's New in News - Jan 3, 2021 to Jan 9, 2021

- [2021-01-10] New short story: "The Contract" [Fiction]

- [2021-01-05] New short story: "Half a History of the Second Singularity" [Fiction]

- [2021-01-02] Finding what's truly new in the news

- [2020-12-28] New short story: "The Project Manager" [Fiction]

- [2020-12-23] New short story: "Freedom at the Speed of Code" [Fiction]

- [2020-12-18] Dreaming cars with AIs

- [2020-12-15] New short story: "Those Left Behind" [Fiction]

- [2020-12-05] New short story: The Language of Love [Fiction]

- [2020-11-28] New short story: The Déjà vu Shield [Fiction]

- [2020-11-28] Chaotic but not complex: over(?)-simplifying recent Argentinean economic history

- [2020-11-23] New short story: The God-Builders [Fiction]

- [2020-11-15] New short story: The Still Wheel [Fiction]

- [2020-11-08] New short story: The Copenhagen Murders [Fiction]

- [2020-11-01] New short story: In the Negative Space of Light [Fiction]

- [2020-10-30] Short story: Years Like Smoke [Fiction]

- [2020-10-30] Short story: The Rumor of Dragons [Fiction]

- [2020-10-30] Short story: The Garden Gambit [Fiction]

- [2020-10-30] Short story: Short Guide to the Museum of Simulated Societies [Fiction]

- [2020-09-28] New short story: The Zoom Journals [Fiction]

- [2020-09-25] New short story: The Friendly Buildings [Fiction]

- [2020-09-14] New short story: The barista who could disarm nuclear bombs [Fiction]

- [2020-09-05] New short story: The Green Gears [Fiction]

- [2020-08-31] New short story: Gold in the Blood [Fiction]

- [2020-08-29] "Aplanar la curva: la ciencia de datos como articuladora de políticas de Estado"

- [2020-08-23] New short story: The Truth About Clones [Fiction]

- [2020-08-16] New short story: Red Requiem [Fiction]

- [2020-08-13] Beyond Kahneman - How to think very slow

- [2020-08-08] New short story: The Frankenstein Society [Fiction]

- [2020-08-01] New short story: Saints of the Scorched Earth [Fiction]

- [2020-07-27] New short story: The Sum of All Secrets [Fiction]

- [2020-07-02] New short story: The Sleeping Hand [Fiction]

- [2020-06-20] New short story: The Uncounted [Fiction]

- [2020-06-19] The ethical nonsense (and plutocratic convenience) of AI rights

- [2020-06-13] New short story: Dream Job [Fiction]

- [2020-06-06] New short story: The Recursive Grammar of Progress [Fiction]

- [2020-05-31] New short story: Frankenstein's Angel [Fiction]

- [2020-05-24] New short story: Don't Share [Fiction]

- [2020-05-18] New short story: The Leadership Advantage [Fiction]

- [2020-05-11] New short story: Afterlife [Fiction]

- [2020-05-09] New short story: The Long Room [Fiction]

- [2020-04-27] New short story: Guidelines for a Contemporary Vampire Story [Fiction]

- [2020-04-21] New short story: Deep Necromancy for a Dead World [Fiction]

- [2020-04-14] New short story: Sundays at the Unicorn Park [Fiction]

- [2020-03-30] New short story: Three Moments from the History of the Exploration of the Solar System [Fiction]

- [2020-03-26] New short story: The Human Touch [Fiction]

- [2020-03-20] New short story: But Do Mental Asylums Dream of Electric Seas? [Fiction]

- [2020-03-11] New short story: Algorithms of Mercy and Judgement [Fiction]

- [2020-02-29] New short story: Flesh Telemetry [Fiction]

- [2020-02-22] New short story: The Death-Baiters of the Worldwide Catastrophe Circuit [Fiction]

- [2020-02-16] New short story: My Brother the Sensor [Fiction]

- [2020-02-12] New short story: "Forensic Geology" [Fiction]

- [2020-02-01] New short story: "The Ghost Town on the Moon" [Fiction]

- [2020-01-26] New short story: "On Accounting as Slow-motion Necromancy" [Fiction]

- [2020-01-20] New short story: How I Found the Brother I Didn't Know I Had [Fiction]

- [2020-01-14] New short story: "The Spirit of Global Sport" [Fiction]

- [2020-01-06] New short story: "The Overview Effect" [Fiction]

- [2019-12-29] New short story: "Sideways" [Fiction]

- [2019-12-17] New short story: "The Rewilding" [Fiction]

- [2019-12-01] New short story: "Blue Collar" [Fiction]

- [2019-11-18] New short story: "Wordless Prayers of the Small Ones" [Fiction]

- [2019-11-09] New short story: "The Poisonous Song of the Skies" [Fiction]

- [2019-11-03] New short story: "Noontime Dawns" [Fiction]

- [2019-11-02] How the New York Times' coverage changed between September and October 2019

- [2019-10-28] New short story: "War Drone" [Fiction]

- [2019-10-21] New short story: "Blue, Grey, Red" [Fiction]

- [2019-10-18] Mapping the shift in what the NY Times covers

- [2019-10-14] New short story: "The Last Words of the Hero of the Heatwave Wars" [Fiction]

- [2019-10-10] New short story - and short SF newsletter [Fiction]

- [2019-04-27] Smart cities are for refugees

- [2019-04-01] AIs are scapegoats and it's us who end up bleeding

- [2019-02-15] Using outcome-driven AI to avoid fake ROI traps

- [2018-12-10] 2019 Should be the end of Big Attention

- [2018-12-08] AI for Science and Management in 2019: You haven't seen disruption yet

- [2018-11-26] Digital anamnesis and other crimes [short story] [Fiction]

- [2018-09-24] Short story: "On Agile Management as a Mechanism of Social Control" [Fiction]

- [2018-07-26] Mapping the shifting constellations of online debate

- [2018-07-19] The politics of crime-fighting software

- [2018-06-24] Using machine learning to read Sherlock Holmes

- [2018-05-02] The mystical underpinnings of Facebook's anti-fake news algorithms

- [2018-04-19] Superman: The Last Son of Prague

- [2018-04-18] Cyber-weapons as a form of magic, and why we can't code our way to a safer internet

- [2018-04-16] Wages, jobs, and unions in a world with AI (among other things)

- [2018-03-29] Applying machine learning to SEC filings to find anomalous companies

- [2018-03-09] Using Artificial Intelligence to Understand Brands

- [2018-02-19] Flash fic: Posthumous

- [2018-02-15] Urban sensors are for the fog of (climate) war

- [2018-02-10] What's the healthiest city in the US? (and what does that even mean?)

- [2018-01-15] The normalcy of online learning: the more you study, the better you do

- [2018-01-12] The normalcy of online learning: the more you study, the better you do

- [2018-01-08] Deep(ly) Unsettling: The ubiquitous, unspoken business model of AI-induced mental illness

- [2017-12-14] When devops involves monitoring for excess suicides

- [2017-12-13] Short story: Nanobots and the Teenage Brain [Fiction]

- [2017-12-05] Short Story: The Voice of Things [Fiction]

- [2017-12-04] Hegemonía electoral y outliers estadísticos

- [2017-12-01] hackaton electoral

- [2017-11-27] Short story: Soul in the Loop [Fiction]

- [2017-11-11] Reinforcement Learning #1

- [2017-11-09] Short story: Logs from a haunted heart [Fiction]

- [2017-10-29] There are only two emotions in Facebook, and we only use one at a time

- [2017-10-24] What makes an algorithm feminist, and why we need them to be

- [2017-10-22] Financial apps and your brain: the neuroscience behind successful consumer fintech

- [2017-10-21] Fútbol, semántica, y violencia política

- [2017-10-16] Any sufficiently advanced totalitarianism is indistinguishable from Facebook

- [2017-10-04] Banks, Fintech, and First-magician Advantage

- [2017-10-03] Open Source is one of the engines of the world's economy and culture. Its next iteration will be bigger.

- [2017-09-30] Russia 1, Data Science 0

- [2017-09-26] ¿Qué quiere decir que la Argentina crezca 3%?

- [2017-09-18] Tesla (or Google) and the risk of massively distributed physical terrorist attacks

- [2017-09-14] Probability-as-logic vs probability-as-strategy vs probability-as-measure-theory

- [2017-09-10] Original Fic: The Gift of Memory

- [2017-08-24] Big Data, Endless Wars, and Why Gamification (Often) Fails

- [2017-07-26] Article in La Nación

- [2017-07-08] No es la grieta, es el techo

- [2017-07-05] This screen is an attack surface

- [2017-07-05] A simplified nuclear game with Kim Jong-un

- [2017-06-13] Regularization, continuity, and the mystery of generalization in Deep Learning

- [2017-05-26] Short story: Nice girl falls in love with vampire boy. Of course he kills her [Fiction]

- [2017-05-24] Don't worry about opaque algorithms; you already don't know what anything is doing, or why

- [2017-05-16] The insidious not-so-badness of technological underemployment, and why more education and better technology won't help

- [2017-05-04] The case for blockchains as international aid

- [2017-05-04] Short story: The Associate [Fiction]

- [2017-04-28] Statistics, Simians, the Scottish, and Sizing up Soothsayers

- [2017-04-25] The Children of the Dead City

- [2017-04-21] Why the most influential business AIs will look like spellcheckers (and a toy example of how to build one)

- [2017-04-17] Don't blame algorithms for United's (literally) bloody mess

- [2017-03-29] Deep Learning as the apotheosis of Test-Driven Development

- [2017-03-27] "Tactical Awareness" en Español

- [2017-02-28] Short story: The Eater of Silicon Sins [Fiction]

- [2017-02-22] Short story: Dead Man's Trigger [Fiction]

- [2017-01-31] The new (and very old) political responsibility of data scientists

- [2017-01-16] Rush Hour

- [2017-01-12] The Mental Health of Smart Cities

- [2017-01-10] How to be data-driven without data...

- [2017-01-03] In the News that Make me Very Proud department... [Fiction]

- [2017-01-02] Safe Travels

- [2016-12-23] The best political countersurveillance tool is to grow the heck up

- [2016-12-07] The informal sector Singularity

- [2016-10-28] In very nice-slash-surprising news...

- [2016-10-18] For the unexpected innovations, look where you'd rather not

- [2016-10-17] The Differentiable Organization

- [2016-10-14] Yuletide Mythos story (Haven't figured out a title yet)

- [2016-10-11] Cuando subastan tus emociones

- [2016-10-07] When your boss is an algorithm (talk outline)

- [2016-10-03] When the world is the ad

- [2016-09-09] When your world is the ad

- [2016-09-09] (Over)Simplifying Calgary too

- [2016-09-06] (Over)Simplifying Buenos Aires

- [2016-08-05] Comentarios Marajofsky

- [2016-07-29] PROJECT MONTGOMERY

- [2016-07-29] PROJECT CHEETO

- [2016-05-05] The job of the future isn't creating artificial intelligences, but keeping them sane

- [2016-02-17] The Trading Company

- [2016-02-17] Scorched Waters

- [2016-02-17] Phantom Notes

- [2016-02-17] Consider the lilies how they grow

- [2016-02-17] Big House Messiah

- [2016-02-16] Nueva Charla: Aplicaciones comerciales de tecnologías militares

- [2016-02-15] Testament

- [2016-02-15] Sleep Paralysis

- [2016-02-15] Mobile ecosystem

- [2016-02-14] Meat Market

- [2016-02-14] Let me not admit impediments

- [2016-02-06] Inheritance

- [2016-02-05] Something waiting for you

- [2016-02-05] Sic Semper

- [2016-02-05] On the uses of empathy

- [2016-02-04] The Ledger

- [2016-02-04] The Game

- [2016-02-04] Of the Forbidden Fruit of the Tree of Knowledge

- [2016-02-04] Honeypot

- [2016-02-04] Graphe Chrysalis

- [2016-02-04] Fateful

- [2016-01-26] The Builders of Cities

- [2016-01-26] Digital Democracy

- [2016-01-26] Ad Astra

- [2016-01-21] The Lotus Protocol

- [2016-01-20] Motherboard: "Sleep Tech Will Widen the Gap Between the Rich and the Poor"

- [2016-01-16] Voyeur

- [2016-01-16] The Wall

- [2016-01-12] The Cartesian News

- [2016-01-12] A, B, Omega

- [2015-12-31] The Heist

- [2015-12-31] The Black Ships

- [2015-12-31] Last Post

- [2015-12-31] Eudaimonia

- [2015-12-31] Apex Predator

- [2015-12-29] The Knowledge of Self

- [2015-12-29] A Rebellion of Ghosts

- [2015-12-28] The Arsonists

- [2015-12-27] The Thoughts of Crowds

- [2015-12-27] The Smile of a Child

- [2015-12-27] The Mars Pioneers

- [2015-12-27] The Art of Mirroring

- [2015-12-27] Software Archeology

- [2015-12-27] Social Engineering

- [2015-12-27] Lessons

- [2015-12-27] Legacy

- [2015-12-27] For Doesn't Love Conquer Time?

- [2015-12-27] Dangerous Strangers

- [2015-12-27] Bodycam Transcript

- [2015-12-22] Windows to the Soul

- [2015-12-20] Personalized

- [2015-12-20] Panopticon

- [2015-12-19] Crowdsourced

- [2015-12-18] Lachesis

- [2015-12-17] The future of machine learning lies in its (human) past

- [2015-12-17] Habilis

- [2015-12-16] The Companion

- [2015-12-15] The truly dangerous AI gap is the political one

- [2015-12-15] Dirge

- [2015-12-14] Simurgh

- [2015-12-13] The Marriage of Heaven and Hell

- [2015-12-10] The Burroughs Hack

- [2015-12-09] The Monster-Maker's Testament

- [2015-12-08] Siege's End

- [2015-11-23] "Prior art" is just a fancy term for "too slow lawyering up"

- [2015-10-28] The gig economy is the oldest one, and it's always bad news

- [2015-10-12] The Man Who Was Made A People

- [2015-10-07] Bitcoin is Steampunk Economics

- [2015-09-25] The price of the Internet of Things will be a vague dread of a malicious world

- [2015-09-16] What places do we know the most about?

- [2015-09-16] We aren't uniquely self-destructive, just inexcusably so

- [2015-09-10] An article about the gamification of personal finances (with a couple of quotes from yours truly)

- [2015-09-08] The Girl and the Forest

- [2015-08-28] Asking a Shadow to Dance

- [2015-08-17] An article in Revista Veintitrés with a couple of quotes from me

- [2015-08-06] The Telemarketer Singularity

- [2015-07-31] For archival purposes

- [2015-07-23] At the End of the World

- [2015-07-15] Datasthesia: Now with a manifesto!

- [2015-06-24] Memory City

- [2015-06-17] The Balkanization of Things

- [2015-06-04] The Secret

- [2015-06-02] Yesterday was a good day for crime

- [2015-05-25] Materializing data

- [2015-05-22] The Rescue (repost)

- [2015-05-22] The Long Stop

- [2015-05-22] And Call it Justice (repost)

- [2015-05-19] A Room in China

- [2015-05-15] The post-Westphalian Hooligan

- [2015-04-23] Soccer, messy data, and why I don't quite believe what this post says

- [2015-04-04] A Spanish translation of The Rescue

- [2015-03-06] A Spanish translation of And Call it Justice

- [2015-02-16] Quantitatively understanding your (and others') programming style

- [2015-02-13] Why we should always keep Shannon in mind

- [2015-02-12] The most important political document of the century is a computer simulation summary

- [2015-02-03] Posted elsewhere: another look at traffic accidents on La Nación Data, and a short story [Fiction]

- [2015-01-31] In self-referential news...

- [2015-01-23] Hi, Hy!

- [2015-01-18] The nominalist trap in Big Data analysis

- [2014-12-15] Going Postal (in a self-quantified way)

- [2014-11-23] Vaca Muerta and the usefulness of prediction markets

- [2014-11-23] By the way, about this year-long hiatus

- [2013-09-21] Another movie space: Iron Man 3 and Stoker

- [2013-09-04] The changing clusters of terrorism

- [2013-08-19] A short note to myself on Propp-Wilson sampling [Fiction]

- [2013-07-27] The Aliens/The Unbearable Lightness of Being classification space of movies

- [2013-07-24] Latent mini-clusters of movies

- [2013-07-22] Finding latent clusters of side effects

- [2013-02-23] A thing I did

- [2012-12-18] Tom Sawyer, Bilingual

- [2012-12-17] A first look at phrase length distribution

- [2012-12-10] The Premier League: United vs. City championship chances

- [2012-12-10] Chesterton's magic word squares

- [2012-12-10] Barcelona and the Liga, or: Quantitative Support for Obvious Predictions

- [2012-12-07] Magic Squares of (probabilistically chosen) Words

- [2012-12-04] The Torneo Inicial 2012 in one graph (and 20 subgraphs)

- [2012-11-27] New collection of very short stories

- [2012-11-26] Update to championship probabilities

- [2012-11-23] Soccer, Monte Carlo, and Sandwiches

- [2012-09-27] A Case in Stochastic Flow: Bolton vs Manchester City

- [2012-09-20] Washington DC and the murderer's work ethic

- [2012-09-12] How Rooney beats van Persie, or, a first look at Premier League data

- [2012-09-10] Crime in Argentina

- [2012-08-27] Chicago and the Tree of Crime

- [2012-08-20] Bad guys, White Hat networks, and the Nuclear Switch

- [2012-08-02] A new collection of (really) short stories

- [2012-06-11] A flow control structure that never makes mistakes (sorta)

- [2012-04-30] A mostly blank slate

- [2012-02-17] Praefatio --- now with a bookmarklet

- [2012-02-15] The perfectly rational conspiracy theorist

- [2012-02-13] Fractals are unfair

- [2012-01-29] A way to read Wikipedia

- [2012-01-22] A modest, if egocentric, proposal

- [2012-01-17] Men's bathrooms are (quantum-like) universal computers

- [2012-01-16] A quick look at Elance statistics

- [2012-01-13] First post: what I'm interested in these days